by Maciej Pęśko

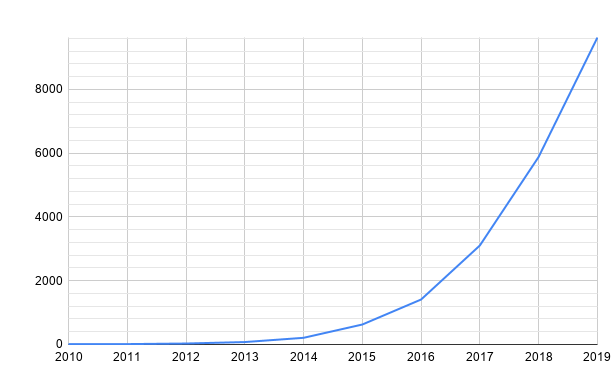

Over the last few years we have observed outstanding progress in Deep Learning. The number of Deep Learning research papers published on arxiv.org each year is growing exponentially, as can be seen in the figure below. This would not have been possible without a similar increase in the computing power of modern GPUs, which are crucial for training state-of-the-art AI models. Particularly interesting from the VFX industry's perspective is the use of AI in Computer Vision, which can solve a significant number of visual effects problems. One could venture that we are witnessing a transformation not seen since the CGI revolution.

Generative AI

Among the latest technological advances are so-called Generative Models, which can produce highly realistic images or video in a variety of fields, such as the semantic image synthesis presented in [1]. That work presents a neural network that can create different, high-quality pictures based only on semantic masks. A similar approach could be used to synthesize whole high-resolution video sequences and save VFX companies a lot of time and money. Let’s imagine that a chosen video shot, e.g. from The Last Jedi (animation below), could be constructed from just a simple semantic mask and a small database of images of mountains, people, sky and the Millennium Falcon. It may seem unreal, but given how fast this industry is growing, perhaps we are almost there with such solutions.

Another example of the generative approach is Deep Fakes, where some recently presented papers show astonishing results. For example, in [2] the authors demonstrated how to animate almost any image based on a video from the same category. Below we can see a case where static images of different characters from Game of Thrones are animated based on a short sequence of Donald Trump speaking. The quality of the produced images is simply amazing, and the many situations in which this could be used make the work even more valuable.

Automation

People from the VFX industry agree that video post-production consists of many repetitive, manual tasks such as tracking, compositing, animation and rotoscoping. However, with recent developments in Deep Learning, many of these tasks can be almost fully automated, with a large reduction in time and cost. Rotoscoping, that is, extracting selected objects such as people or vehicles from their background in a video, is used in almost every post-production project. The process is so time-consuming that it is very often outsourced to cheaper foreign studios, but it still absorbs plenty of time and generates unnecessary costs. Fortunately, this is where Deep Learning shows its strengths. At comixify.ai we developed our own proprietary AI algorithm to make rotoscoping as easy as “Upload an arbitrary number of frames, mark the object on the first frame and get results for the whole sequence”. With our tool, the only thing you need to rotoscope any object in any video sequence is one reference mask for the selected object. AI will do the rest: it will work out which object should be rotoscoped and propagate this information to all the given frames. The flow chart is shown below, along with an example video with a replaced background.
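To make the final "replaced background" step more concrete, here is a minimal sketch in plain Python. It is not the comixify.ai algorithm, which is proprietary and not described here. It only illustrates standard mask-based compositing, assuming the per-frame object masks have already been produced by some rotoscoping step. The function names, the nested-list image representation and the mask convention (1.0 for the object, 0.0 for the background) are all assumptions made for illustration.

```python
from typing import List, Tuple

# Hypothetical toy representations, chosen only to keep the sketch dependency-free.
Pixel = Tuple[int, ...]        # (R, G, B), each channel in 0..255
Image = List[List[Pixel]]      # rows of pixels
Mask = List[List[float]]       # rows of opacities: 1.0 = object, 0.0 = background


def replace_background(frame: Image, mask: Mask, background: Image) -> Image:
    """Blend each pixel as: out = mask * frame + (1 - mask) * background."""
    if not (len(frame) == len(mask) == len(background)):
        raise ValueError("frame, mask and background must have the same height")

    result: Image = []
    for frame_row, mask_row, bg_row in zip(frame, mask, background):
        if not (len(frame_row) == len(mask_row) == len(bg_row)):
            raise ValueError("frame, mask and background must have the same width")
        out_row = []
        for fg, alpha, bg in zip(frame_row, mask_row, bg_row):
            # Soft mask values between 0 and 1 give smooth object edges.
            blended = tuple(round(alpha * f + (1.0 - alpha) * b)
                            for f, b in zip(fg, bg))
            out_row.append(blended)
        result.append(out_row)
    return result


def replace_background_in_sequence(frames: List[Image],
                                   masks: List[Mask],
                                   background: Image) -> List[Image]:
    """Apply the same new background to every frame, using one mask per frame."""
    if len(frames) != len(masks):
        raise ValueError("need exactly one mask per frame")
    return [replace_background(f, m, background) for f, m in zip(frames, masks)]


if __name__ == "__main__":
    # One 1x2 frame: the left pixel belongs to the object, the right one does not.
    frame = [[(200, 100, 0), (10, 20, 30)]]
    mask = [[1.0, 0.0]]
    new_bg = [[(0, 0, 255), (0, 0, 255)]]
    print(replace_background(frame, mask, new_bg))
```

In a real pipeline the frames would be arrays handled by an image library rather than nested lists, but the per-pixel blend is the same. The hard part, which this sketch deliberately leaves out, is obtaining an accurate mask for every frame from a single reference mask.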

Can AI replace humans?

Given the potential of Deep Learning, it is just a matter of time before AI completely changes the VFX industry. The examples presented here are only a drop in the ocean of what can be achieved using state-of-the-art technology. All the boring, manual work will be handed over to Artificial Intelligence, leaving only the most difficult and creative parts of visual effects work for humans. The rapid development we observe in the field of Computer Vision is sometimes overwhelming, but the results are so impressive that we cannot wait for more. What a time to be alive!

Sources:

- https://onsetfacilities.com/on-set-ai-for-the-vfx-industry/

- https://www.ibc.org/create-and-produce/how-ai-is-reinventing-visual-effects/4060.article

- https://rossdawson.com/futurist/implications-of-ai/comprehensive-guide-ai-artificial-intelligence-visual-effects-vfx/

- https://seanseattle.github.io/SMIS/

- https://aliaksandrsiarohin.github.io/first-order-model-website/