by Maciej Pęśko

Embedded Computer Vision – OpenCV AI Kit + DepthAI

We recently got a small Computer Vision toy to play with: the OpenCV AI Kit. It is a compact, portable device that combines a camera with enough computing power to run simple AI models, which opens up a wide range of applications that are fairly easy to build. For instance, this single device is enough to create a parking monitor that detects cars and reads their license plates. It is even easier to use, and really cool, thanks to the DepthAI library, which ships with a large number of pretrained models ready to run on such embedded devices.

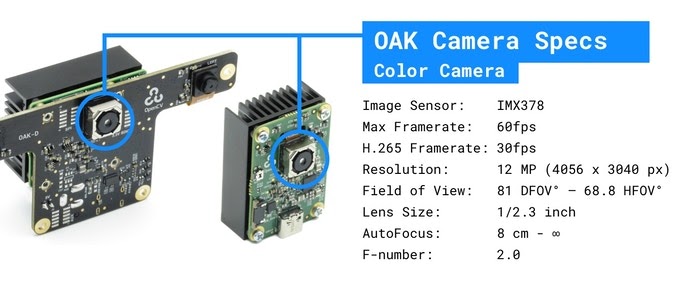

Hardware – OpenCV AI Kit

The AI Kit comes in two versions:

– OAK-1 with a single 4056×3040 px color camera, up to 60 FPS

– OAK-D with two 1280×800 px stereo cameras, up to 120 FPS, alongside the color camera

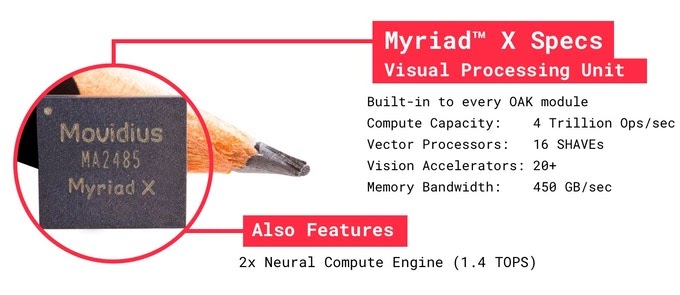

The camera parameters look really good for common use cases of such devices, especially given the price. Now let’s look at the computing power. According to the product’s Kickstarter page, each device is equipped with an Intel visual processing unit: the Intel Movidius Myriad X, featuring 16 programmable SHAVE cores and two dedicated neural compute engines for hardware acceleration of deep neural networks. It sounds promising, but we couldn’t resist checking it ourselves! If you are interested in more hardware details, the full specification can be found in the graphic above or here.

Software – DepthAI pretrained models

Setting up the OpenCV AI Kit is fairly easy. We can use the DepthAI library, which comes with plenty of pretrained and optimised Machine Learning models, including face and landmark detection, vehicle detection, pedestrian detection, pose estimation, text detection, semantic segmentation, emotion recognition and a few more. It is fairly well documented here. At first glance it seems awesome to have so many models on such a small device, but let’s check how fast and accurate they actually are on the OpenCV AI Kit. We tested a standard OAK-1 device with several of the included pretrained models; the results are shown below. For each model we report the FPS it achieves along with our subjective opinion of its accuracy, based on a few example uses.

Tab. 1. DepthAI pretrained models benchmark on the OAK-1.

| Pretrained model | Model FPS | Subjective quality opinion |
|---|---|---|
| Face detection | 30 | Good for basic usage. Performs really well on frontal faces that are not too far from the camera, but sometimes gets lost with rotated faces or faces in profile. |
| Pedestrian detection | 13 | Satisfactory accuracy, but the model produces quite a lot of false positives (e.g. a chair or a lamp). Raising the confidence threshold (default 0.5) might help. |
| Emotions recognition | 30 | It seems to distinguish only two emotions: “surprised” and “happy”. It sometimes detects “anger”, but not reliably. To get satisfactory results, the face must be right in front of the camera and fill about 80% of the frame. |
| Vehicle detection | 14 | Good accuracy for standard cars, slightly worse for motorbikes and unusual cars. |
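The pedestrian row suggests raising the confidence threshold to cut false positives. Below is a minimal, library-independent Python sketch of that idea. It assumes detections arrive as simple label/confidence records; the `Detection` class is hypothetical and is not the DepthAI output type, and the sample scores are made up purely for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Detection:
    # Hypothetical detection record; NOT the DepthAI output format.
    label: str
    confidence: float


def filter_detections(detections: List[Detection], threshold: float = 0.5) -> List[Detection]:
    """Keep only detections whose confidence is at least `threshold`."""
    return [d for d in detections if d.confidence >= threshold]


# Made-up scores: two confident detections and two borderline ones
# (imagine a chair and a lamp misread as people).
sample = [
    Detection("person", 0.91),
    Detection("person", 0.78),
    Detection("person", 0.56),
    Detection("person", 0.52),
]

default_kept = filter_detections(sample)       # default threshold of 0.5
strict_kept = filter_detections(sample, 0.7)   # raised threshold
print(len(default_kept), len(strict_kept))     # prints: 4 2
```

The trade-off is that a higher threshold may also drop genuine pedestrians seen at low confidence, so the value is worth tuning on footage from the actual deployment.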

Both quality and speed vary between models. Taking the device’s size and price into consideration, some models perform really well; face and vehicle detection in particular look usable in real-world cases. The speed is also impressive: the models run in real time (30 FPS) or near real time (13 to 14 FPS).
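To put the FPS numbers from Table 1 in perspective, the average time available per frame is simply 1000 / FPS milliseconds. The Python sketch below does that arithmetic for the measured values; the 25 FPS cut-off used to label a model as “real time” is our own assumption, not a standard.

```python
# FPS values measured on the OAK-1 (Table 1).
MODEL_FPS = {
    "Face detection": 30,
    "Pedestrian detection": 13,
    "Emotions recognition": 30,
    "Vehicle detection": 14,
}

# Assumed cut-off for calling a model "real time"; adjust to your application.
REAL_TIME_FPS = 25


def frame_latency_ms(fps: float) -> float:
    """Average time per frame in milliseconds at the given framerate."""
    return 1000.0 / fps


for model, fps in MODEL_FPS.items():
    status = "real time" if fps >= REAL_TIME_FPS else "near real time"
    print(f"{model}: {frame_latency_ms(fps):.1f} ms/frame ({status})")
```

Face detection and emotion recognition work out to about 33.3 ms per frame, while pedestrian and vehicle detection need roughly 77 and 71 ms.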

Software – our own Style Transfer models

However, the pretrained models only give a general impression of how useful the AI Kit can be. Being inquisitive by nature, we were curious how fast this device is compared with different GPU and CPU configurations running the same deep learning model. Fortunately, the DepthAI library and the OpenCV AI Kit make this entirely possible. We decided to take one of our Style Transfer models, convert it to the required format (the AI Kit requires models in a specific OpenVINO format; the details can be found here) and compare its time performance. The conversion process was somewhat complicated, and we ran into a few minor and major obstacles, but we finally got a working model. The results can be seen in the table below.
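We do not describe here exactly how the timings were collected, so the following is only an assumption about a reasonable method. A common approach, sketched below in Python, is to run a few untimed warm-up passes (so that one-off costs such as lazy initialisation do not skew the result) and then report the median of several timed runs. `run_inference` is a hypothetical stand-in for whatever call executes the model on a given backend.

```python
import statistics
import time
from typing import Callable


def benchmark(run_inference: Callable[[], object], warmup: int = 3, repeats: int = 10) -> float:
    """Return the median wall-clock time in seconds of `run_inference`.

    Warm-up calls are executed but not timed, so one-off costs
    (e.g. lazy initialisation) do not distort the measurement.
    """
    for _ in range(warmup):
        run_inference()
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        run_inference()
        timings.append(time.perf_counter() - start)
    return statistics.median(timings)


if __name__ == "__main__":
    # Hypothetical CPU-bound workload standing in for a style-transfer forward pass.
    def fake_inference():
        return sum(i * i for i in range(100_000))

    print(f"median: {benchmark(fake_inference):.4f} s")
```

The median is used rather than the mean because it is less sensitive to occasional slow runs caused by other processes on the machine.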

Tab. 2. Style Transfer inference time [s] on different CPU and GPU configurations.

| Hardware and software | 480×854 | 720×1280 | 1080×1920 |
|---|---|---|---|
| OpenCV AI Kit OAK-1, OpenVINO 2020.1, FP16 | 0.992 s | 2.650 s | 5.618 s |
| Tesla V100 (CUDA 10.1, cuDNN 7), TensorFlow 2.3.1, FP32 | 0.023 s | 0.042 s | 0.097 s |
| GeForce RTX 3070 (CUDA 11, cuDNN 8), TensorFlow 2.3.1, FP32 | 0.046 s | 0.072 s | 0.204 s |
| GeForce GTX 1650 (CUDA 10.1, cuDNN 7), TensorFlow 2.3.1, FP32 | 0.079 s | 0.182 s | 0.395 s |
| Intel Core i7-8750H 2.2 GHz 6-core + Radeon Pro 555X, Core ML 4.0, FP16 | 0.149 s | 0.297 s | 0.639 s |
| Ryzen 5 3600 4.2 GHz 6-core, TensorFlow 2.3.1, FP32 | 0.499 s | 1.217 s | 2.658 s |
| Intel Xeon E5-2686 v4 2.3 GHz, 8 of 18 cores, TensorFlow 2.3.1, FP32 | 0.724 s | 1.647 s | 3.949 s |
| Intel Core i7-8750H 2.2 GHz 6-core, TensorFlow 2.3.1, FP32 | 0.735 s | 1.620 s | 3.572 s |
| Intel Core i7-8750H 2.2 GHz 6-core, Core ML 4.0, FP32 | 0.783 s | 1.723 s | 4.316 s |
| Intel Core i5-5200U 2.2 GHz 2-core (4 threads), TensorFlow 2.3.1, FP32 | 1.789 s | 3.759 s | 8.830 s |

We tested the same Style Transfer model on several CPUs and GPUs that we had access to, at three resolutions: 480×854, 720×1280 and 1080×1920. All times are given in seconds. As we can see, the OpenCV AI Kit is comparable to the benchmarked CPUs. It is roughly 1.3 to 1.6 times slower than the Intel Core i7 (with both the TensorFlow and the Core ML FP32 versions of the model), about two times slower than the Ryzen 5, and about 1.4 to 1.8 times faster than the Intel Core i5. It is much slower (around 7 to 9 times) than the Core ML version running on both the CPU (Intel Core i7) and the GPU (Radeon Pro 555X 4 GB) on macOS. All benchmarked GPUs were more than an order of magnitude faster than the OpenCV AI Kit, which was, unsurprisingly, the expected result.
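The relative speeds quoted above follow directly from Table 2. The Python snippet below recomputes them by dividing the OAK-1 time by each other configuration’s time at every resolution; the values are copied from the table, and the shortened row labels are ours.

```python
# Inference times in seconds from Table 2, ordered as (480x854, 720x1280, 1080x1920).
TIMES = {
    "OAK-1 (OpenVINO, FP16)": (0.992, 2.650, 5.618),
    "Tesla V100": (0.023, 0.042, 0.097),
    "RTX 3070": (0.046, 0.072, 0.204),
    "GTX 1650": (0.079, 0.182, 0.395),
    "i7-8750H + Radeon Pro 555X (Core ML)": (0.149, 0.297, 0.639),
    "Ryzen 5 3600": (0.499, 1.217, 2.658),
    "Xeon E5-2686 v4": (0.724, 1.647, 3.949),
    "i7-8750H (TensorFlow)": (0.735, 1.620, 3.572),
    "i7-8750H (Core ML, FP32)": (0.783, 1.723, 4.316),
    "i5-5200U": (1.789, 3.759, 8.830),
}


def slowdown_vs(reference: str, other: str) -> tuple:
    """How many times slower `reference` is than `other` at each resolution.

    Values above 1 mean `reference` is slower; values below 1 mean it is faster.
    """
    return tuple(round(r / o, 2) for r, o in zip(TIMES[reference], TIMES[other]))


oak = "OAK-1 (OpenVINO, FP16)"
for name in TIMES:
    if name != oak:
        print(f"{name}: {slowdown_vs(oak, name)}")
```

For example, the Ryzen 5 row gives ratios of about 2.0 to 2.2, while every GPU row stays above 10.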

Low price, many applications

All in all, the product is fairly cheap and works really well in simple cases like face or pedestrian detection. It can certainly be used in applications like automatic license plate reading or counting people in a given area. On the other hand, its time performance in more complex cases, such as our stylization models, is not enough to ensure a satisfactory framerate at larger resolutions like 720p or 1080p. Perhaps with some time spent on optimization it could run faster.
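To make the framerate point concrete, the OAK-1 Style Transfer times from Table 2 can be inverted into frames per second. A minimal Python sketch follows; the times are copied from the table, while the 24 FPS target is only an assumed example of a “satisfactory” video framerate.

```python
# OAK-1 Style Transfer times in seconds per frame (Table 2).
OAK1_SECONDS_PER_FRAME = {
    "480x854": 0.992,
    "720x1280": 2.650,
    "1080x1920": 5.618,
}

TARGET_FPS = 24  # assumed target framerate; adjust to your use case

for resolution, seconds in OAK1_SECONDS_PER_FRAME.items():
    fps = 1.0 / seconds
    # How many times faster the model would need to run to reach the target.
    needed_speedup = TARGET_FPS / fps
    print(f"{resolution}: {fps:.2f} FPS, needs ~{needed_speedup:.0f}x speed-up for {TARGET_FPS} FPS")
```

At 720p this gives under 0.4 FPS and at 1080p under 0.2 FPS, so reaching typical video framerates would take a speed-up of two orders of magnitude rather than small optimizations alone.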