“Give an Imp a couple mice and it’ll paint whatever you like! Everyone knows that… everyone knows that!…” (Lady Sybil Ramkin, The Watch TV series, season one, episode six, “The Dark in The Dark”)

The TV series The Watch, produced by BBC America, is now on air, and we are proud to see our tech used in it! In fact, there is no other way to say it: we are the Imps, because our tech helped the post-production team recreate the hand-drawn, Imp-made animation sequences. Our Style Transfer was used by Lola Post Production, the VFX vendor of The Watch (a series inspired by characters from Sir Terry Pratchett's Discworld novels), currently delivering over 650 shots, to redraw specific scenes as animation. We are extremely proud to work with one of the most respected and reliable independent VFX studios in London, especially on a BBC America TV series! The results of this cooperation can be seen in two episodes of season one.

What is Style Transfer?

In short, Style Transfer lets you apply different artistic and comic-book filters to your videos. All you need to do is upload your video or frames to the VFX Platform and pick a style. It may sound familiar, as we have already used similar technology on our proof-of-concept (POC) website, comixify.ai. Accessible through our SaaS platform, Style Transfer can convert any video or still frames into animation.

Depending on the style you choose, it can be gentle with your video (the gray-haired wizard above) or go further, to the point where your video looks much closer to cartoon animation (the two travelers in the desert, below).

The beauty of our tech is that we can also develop any style on demand. We can derive it from existing materials or build it in cooperation with a cartoonist. Such cooperation allows us to create any style you can dream of, starting from just 10 hand-drawn images!

Style Transfer – How it Works!

We have covered the technical side of Style Transfer in a number of articles. The one that describes the whole process best is “Can Undone be done differently?” by Paweł Andruszkiewicz. In essence, if you are short on time, Style Transfer was “[…] first introduced a couple of years ago by L. Gatys et al. in the publication ‘A Neural Algorithm of Artistic Style’. Style Transfer has come a long way from a very slow optimization-based technique to the most recent methods based on state-of-the-art Machine Learning models. In comixify.ai we developed our own proprietary Style Transfer technology based on Deep Learning Generative Adversarial Networks. Our method is able to clone the style of any artist from just a few representative painted or drawn samples and then apply it to any video sequence with outstanding quality.

We train our Machine Learning models to produce similar stylized images automatically, using our own proprietary Generative Adversarial Network-based method. To be more precise, we train two networks: the Generator (the actual stylization model) and the Discriminator (an auxiliary model that tries to tell whether a sample was produced by the Generator or drawn by an artist). This pair of Neural Networks, trained simultaneously, is the core of our training pipeline. We also show ground truth samples (prepared by the artist) to the Generator to enhance the training process and make it much faster. […]”
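To make the Generator/Discriminator interplay a bit more concrete, here is a minimal sketch of the two training objectives in plain Python. It is not our proprietary method: it assumes a standard binary cross-entropy adversarial loss and models the "ground truth samples shown to the Generator" as an L1 reconstruction term with a hypothetical weight `lam`, similar to the well-known pix2pix formulation. Images are flattened lists of pixel values and Discriminator outputs are probabilities, so the example runs without a deep learning framework.

```python
import math


def bce(p: float, target: float, eps: float = 1e-7) -> float:
    """Binary cross-entropy for one predicted probability p against a 0/1 target."""
    p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))


def discriminator_loss(d_on_artist: list[float], d_on_generated: list[float]) -> float:
    """The Discriminator should output 1 for artist drawings and 0 for Generator outputs."""
    real = sum(bce(p, 1.0) for p in d_on_artist) / len(d_on_artist)
    fake = sum(bce(p, 0.0) for p in d_on_generated) / len(d_on_generated)
    return real + fake


def l1(a: list[float], b: list[float]) -> float:
    """Mean absolute pixel difference between two flattened images."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def generator_loss(
    d_on_generated: list[float],
    generated: list[float],
    ground_truth: list[float],
    lam: float = 10.0,  # hypothetical weight of the ground-truth (reconstruction) term
) -> float:
    """Adversarial term (fool the Discriminator) plus an L1 term against the artist's frame."""
    adversarial = sum(bce(p, 1.0) for p in d_on_generated) / len(d_on_generated)
    return adversarial + lam * l1(generated, ground_truth)
```

In a real pipeline both losses would be minimized alternately with gradient descent on neural networks; the sketch only shows what each network is rewarded for. A Generator that fools the Discriminator and stays close to the artist's frame gets a low loss, while the reconstruction term gives it a direct signal early on, which is one plausible reason such ground truth samples speed training up.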

The VFX Platform

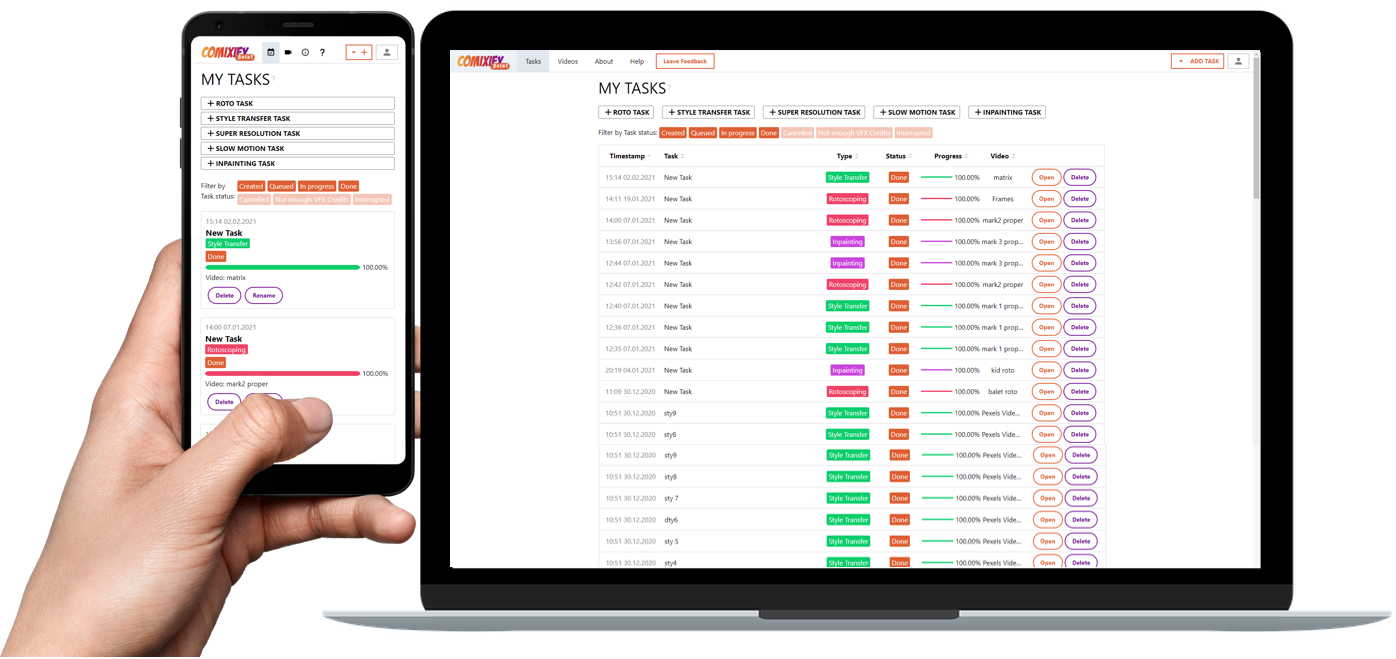

Our VFX Platform, used by Lola Post Production to convert the original frames into an animated version, is now available to anyone, free of charge, as an Open Beta! You can test much more than the Style Transfer mentioned above.

The current version of VFX Platform offers:

Style Transfer

As described above: an amazing, easy-to-use tool that can do… magic!

Rotoscoping

Roto lets users segment any object in a video scene with pixel-level accuracy. Based on the given reference frames, our model recognizes the object and tracks its position through the whole sequence with astonishing accuracy. As a result, users get a mask for every frame of the video, along with Silhouette spline files ready to import into most roto editing software.
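Going from a per-frame mask to a spline starts with finding the mask's outline. The sketch below shows only that first step, under simple assumptions: a mask is a hypothetical list of rows of 0/1 values, and a pixel counts as boundary if it belongs to the object and touches background (or the frame edge) in its 4-neighbourhood. It does not produce Silhouette files; fitting and exporting splines from these points is a separate step not shown here.

```python
Mask = list[list[int]]  # hypothetical format: 1 = object pixel, 0 = background


def mask_boundary(mask: Mask) -> list[tuple[int, int]]:
    """Return (row, col) of object pixels that touch background or the image border."""
    h, w = len(mask), len(mask[0])
    edge: list[tuple[int, int]] = []
    for r in range(h):
        for c in range(w):
            if not mask[r][c]:
                continue  # background pixels are never part of the outline
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                rr, cc = r + dr, c + dc
                outside = not (0 <= rr < h and 0 <= cc < w)
                if outside or not mask[rr][cc]:
                    edge.append((r, c))
                    break  # one background neighbour is enough
    return edge


def sequence_boundaries(masks: list[Mask]) -> list[list[tuple[int, int]]]:
    """Apply the outline extraction to every frame's mask of a shot."""
    return [mask_boundary(m) for m in masks]
```

The returned points are unordered; a spline fitter would first need to trace them into a closed contour.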

Super Slow Motion

The underlying technology is designed to synthesize additional in-between frames from the input video, raising its frame rate almost without limit. In essence, the AI increases the FPS so that the video stays smooth even when slowed down 10 times. Our tech can do it in minutes and, what is more, artifacts across the whole video are almost unnoticeable.
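The arithmetic behind "smooth even when slowed down 10 times" is simple: to play a clip 10 times slower at the same playback rate, you need 10 times as many frames, so `factor - 1` new frames must be created between every pair of originals. The sketch below uses the most naive method, linear cross-fading of pixel values, purely as an illustrative baseline; it is not the model behind our service, which has to estimate motion instead of blending (blending produces ghosting on moving objects). Frames are assumed to be flattened lists of grayscale values.

```python
Frame = list[float]  # assumed format: flattened grayscale pixel values


def interpolate(frames: list[Frame], factor: int) -> list[Frame]:
    """Insert factor - 1 linearly blended frames between each consecutive pair."""
    if factor < 1:
        raise ValueError("factor must be >= 1")
    if not frames:
        return []
    out: list[Frame] = []
    for a, b in zip(frames, frames[1:]):
        for k in range(factor):
            t = k / factor  # position between frame a (t=0) and frame b (t=1)
            out.append([(1 - t) * x + t * y for x, y in zip(a, b)])
    out.append(list(frames[-1]))  # keep the final original frame
    return out
```

For `n` input frames the result has `(n - 1) * factor + 1` frames, so a 24 fps clip interpolated with `factor=10` can be played back at 24 fps 10 times slower.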

Super Resolution

There are thousands of hours of material recorded in 480p or 720p. Such footage often cannot be used in video production, because the standards are now 4K and 8K. Luckily, our Super Resolution module can help with that. The Deep Learning model behind our service is trained to understand the content of the provided video and enhance the details frame by frame across the whole sequence. It was trained on a huge dataset of different video scenes to make it as versatile as possible, which allows it to upscale any video sequence to up to 8K with an increasing level of detail.
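To put the gap in numbers, the sketch below computes the vertical upscaling factors between the formats mentioned above (using the standard frame heights: 480, 720, 2160 for UHD 4K, 4320 for UHD 8K) and shows nearest-neighbour upscaling, the naive baseline that simply repeats pixels. It is an illustration only: a learned Super Resolution model has to invent plausible detail that pixel repetition cannot, and the image format (a list of pixel rows) is an assumption.

```python
HEIGHTS = {"480p": 480, "720p": 720, "4K": 2160, "8K": 4320}  # frame heights in pixels


def scale_factor(src: str, dst: str) -> float:
    """How many times each dimension must grow to go from src to dst."""
    return HEIGHTS[dst] / HEIGHTS[src]


def upscale_nearest(img: list[list[int]], factor: int) -> list[list[int]]:
    """Naive baseline: repeat every pixel factor times horizontally and every row vertically."""
    return [
        [px for px in row for _ in range(factor)]
        for row in img
        for _ in range(factor)
    ]
```

Going from 720p to 4K means tripling each dimension (9 times as many pixels), and from 480p to 8K means a 9x factor per dimension, i.e. 81 output pixels for every input pixel, which is why simple repetition looks blocky and a content-aware model is needed.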

Inpainting

The Deep Learning model behind our Inpainting service is trained to understand the position of the unwanted object in the context of the whole sequence. It was trained on a massive dataset of different video scenes to make it as versatile as possible, which allows it to remove almost any object from a video sequence.
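Why does looking at the whole sequence help? When an object moves, the background it hides in one frame is often visible in others. The sketch below shows that idea with a classical baseline, not our model: every pixel covered by the unwanted object is replaced by the median of the same pixel in the frames where it is uncovered. It assumes a static camera, flattened frames, and hypothetical per-frame boolean masks; pixels hidden in every frame are left untouched, which is exactly where a learned model has to synthesize content.

```python
from statistics import median


def temporal_fill(frames: list[list[float]], masks: list[list[bool]]) -> list[list[float]]:
    """Fill masked pixels from the same pixel position in frames where it is visible."""
    out: list[list[float]] = []
    for frame, mask in zip(frames, masks):
        filled = list(frame)
        for i, hidden in enumerate(mask):
            if not hidden:
                continue
            # Collect this pixel from every frame in which the object does not cover it.
            visible = [f[i] for f, m in zip(frames, masks) if not m[i]]
            if visible:
                filled[i] = median(visible)
        out.append(filled)
    return out
```

Using the median rather than the mean makes the fill robust to a few frames where other objects pass through the same pixel.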

VFX Platform Open Beta

As we keep developing our tools to make them faster and better, we are inviting users to log in to our VFX Platform Open Beta and test the tech for free! When you create an account, you get 5000 free VFX Credits to use and test all our modules. Try Style Transfer, or maybe you want to test Inpainting; it is your call!

Have fun and let us know what we could do better!

Oh! One last disclaimer… We know that we did Imp work here, and we are open to many forms of remuneration, but mice… are not one of them.